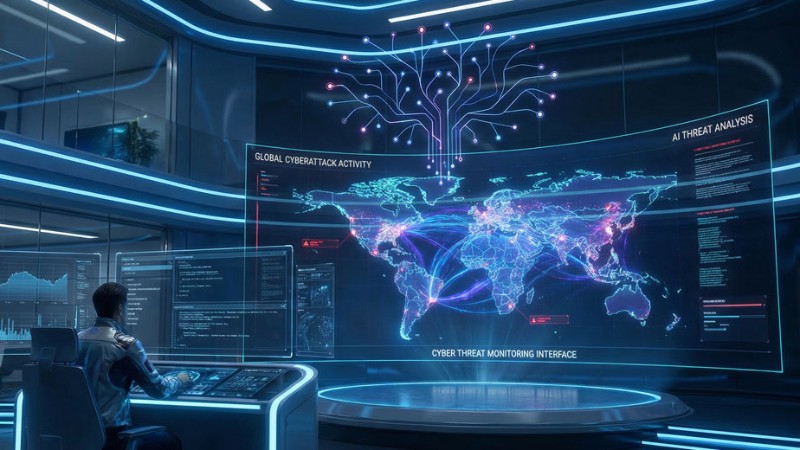

Microsoft Warns of Hackers Supercharging Cyberattacks With AI

New research from Microsoft warns organizations that threat actors have begun integrating artificial intelligence (AI) into their workflows to accelerate tradecraft, bypass safeguards, and conduct malicious activity.

Just as the cyber underworld borrowed practices from legitimate businesses, including corporate-style organizational structures, as-a-service models, and specialization, it is now emulating enterprises that integrate AI into their operations to increase the speed, scale, and resilience of its malicious operations, Microsoft explained in its security blog.

The blog noted that, according to Microsoft’s threat intelligence unit, most malicious AI use today centers on language models that produce text, code, or media. Threat actors use generative AI to draft phishing lures, translate content, summarize stolen data, generate or debug malware, and scaffold scripts or infrastructure.

“AI is rapidly becoming embedded across the entire cyberattack lifecycle, but not always in the ways people expect,” said Ensar Seker, CISO of SOCRadar, a threat intelligence company in Newark, Del.

“In many cases, threat actors are not building their own advanced AI models,” he told TechNewsWorld. “Instead, they are operationalizing existing generative AI tools to accelerate traditional attacker workflows.”

“The biggest impact of AI in cyber operations is efficiency rather than completely new attack techniques,” he added.

“However,” he continued, “AI does not replace traditional attacker tradecraft or eliminate the need for human expertise. Sophisticated campaigns, especially those conducted by nation-state groups, still rely heavily on manual reconnaissance, custom tooling, and operational security discipline.”

“AI is acting more as a force multiplier than a replacement for established tactics,” he said. “Threat actors still need access, infrastructure, and a clear objective. AI simply helps them move faster once those elements are in place.”

AI Speeds Attack Preparation

“Think about where threat actors used to spend their time,” observed Stu Bradley, senior vice president for risk, fraud, and compliance solutions at SAS, an analytics and AI software company in Cary, N.C. “Finding and researching their victims, drafting convincing phishing messages, developing a relationship over weeks or months to get that romance scam payout.”

“With the use of readily available AI tools, those once time-intensive tasks are being streamlined and automated,” he told TechNewsWorld. “GenAI enables fraudsters to produce polished, targeted content in seconds — content that previously would have taken hours to craft. As a result, the gap between finding a victim, or victims, as is often the case, and launching an attack keeps shrinking.”

“And because criminals also use these AI tools to automate their attacks, they can also target many more victims at once, with much less manual effort,” he said. “The impact is staggering when you consider that most fraud schemes are a numbers game. You won’t hit every time, but the more often you try, the better your odds.”

Threat actors leverage AI to fully automate the manual, time-consuming stages of the cyber kill chain, added Eric Schwake, director of cybersecurity strategy at Salt Security, an API security provider in Palo Alto, Calif.

“They use generative AI to quickly perform reconnaissance, write and debug malicious code, and create highly targeted phishing schemes in seconds,” he told TechNewsWorld. “By reducing the time needed to develop and deploy an exploit, adversaries can strike faster than traditional security measures or human analysts can respond.”

Force Multiplier

Microsoft noted that its threat intelligence unit has also observed threat actors using AI for operational tasks that are not inherently malicious but materially support their broader objectives. In these cases, AI is applied to improve the efficiency, scale, and sustainability of operations, not to directly execute attacks.

“AI allows threat actors to run more of the attack lifecycle in parallel,” explained Jacob Krell, senior director for secure AI solutions and cybersecurity at Suzu Labs, a provider of AI-powered cybersecurity services in Las Vegas.

“Reconnaissance, persona development, phishing lure generation, infrastructure setup, and post-compromise data triage can all be performed faster and across more targets at once,” he told TechNewsWorld. “What previously required multiple specialists can now be compressed into a repeatable workflow.”

AI solves the headcount problem for organized criminal rings, SAS’s Bradley added. “You no longer need a large crew to run a large operation,” he explained. “The automation of AI content generation means they can flood email, SMS, voice, and social channels simultaneously with personalized messages that pass the sniff test much more convincingly than scams of the past.”

AI functions as a powerful force multiplier, enabling a single attacker to coordinate thousands of simultaneous intrusions, Salt Security’s Schwake noted. “Attackers are using AI to automate target recognition, password spraying, and the rapid setup of attack infrastructure, such as look-alike domains,” he said. “This ability allows relatively inexperienced actors to execute highly complex campaigns across a global attack surface without a commensurate increase in human resources.”

AI Helps Attackers Rebuild Faster

Microsoft also pointed out that threat actors are exploiting AI for resource development. With AI models, it explained, threat actors can design, configure, and troubleshoot their covert infrastructure. This lowers the technical barrier for less sophisticated actors and accelerates the deployment of resilient infrastructure while minimizing the risk of detection.

“AI enables more adaptive and evasive tooling,” observed Vincenzo Iozzo, CEO and co-founder of SlashID, an identity threat detection and response provider in Chicago.

“AI-generated malware can be polymorphic, rewriting its own code to evade signature-based detection,” he told TechNewsWorld. “AI also allows threat actors to rapidly iterate on campaigns that get blocked — regenerating payloads, rotating infrastructure, and adjusting social engineering lures faster than defenders can respond.”

AI improves resilience by shortening the rebuild cycle for threat actors, added Suzu Labs’ Krell. “When a payload, lure, or infrastructure component is detected, threat actors can use AI to rapidly rework code, refresh phishing content, troubleshoot deployment issues, and reimplement functionality using different libraries or languages,” he explained.

“Command and control callback locations are also rotating more frequently than manual operations would allow, which compresses the window defenders have to act on flagged indicators,” he added.

Rise of Agentic AI in Cybercrime

While generative AI currently accounts for most of the observed threat actor activity involving AI, Microsoft noted that its threat researchers are beginning to see early signals of a transition toward more agentic uses of AI.

For threat actors, this shift could represent a meaningful change in tradecraft by enabling semi-autonomous workflows that continuously refine phishing campaigns, test and adapt infrastructure, maintain persistence, or monitor open-source intelligence for new opportunities.

Microsoft has not yet observed large-scale use of agentic AI by threat actors, largely due to ongoing reliability and operational constraints, it continued. Nonetheless, real-world examples and proof-of-concept experiments illustrate the potential for these systems to support automated reconnaissance, infrastructure management, malware development, and post-compromise decision-making.

“What we are seeing today is early but meaningful experimentation,” Krell said. “Agentic AI is being used to support workflows that involve planning, tool use evaluation, and adaptation over time rather than one-off prompting.”

“Microsoft has reported [North Korean threat actor] Coral Sleet using agentic AI tools in an end-to-end workflow for lure development, infrastructure provisioning, and rapid payload testing and deployment,” he continued. “Large-scale use is still constrained by reliability and operational risk, but the direction is clear.”

“AI is not replacing threat actors,” he added. “It is making them more efficient.”

“The most immediate impact is not fully autonomous intrusions but faster research, faster adaptation, more scalable social engineering, and more sustainable misuse of legitimate access,” he said. “AI acceleration does not follow a linear path. Capabilities compound, and organizations that still think of this as a phishing-email problem are already underestimating the operational shift underway.”